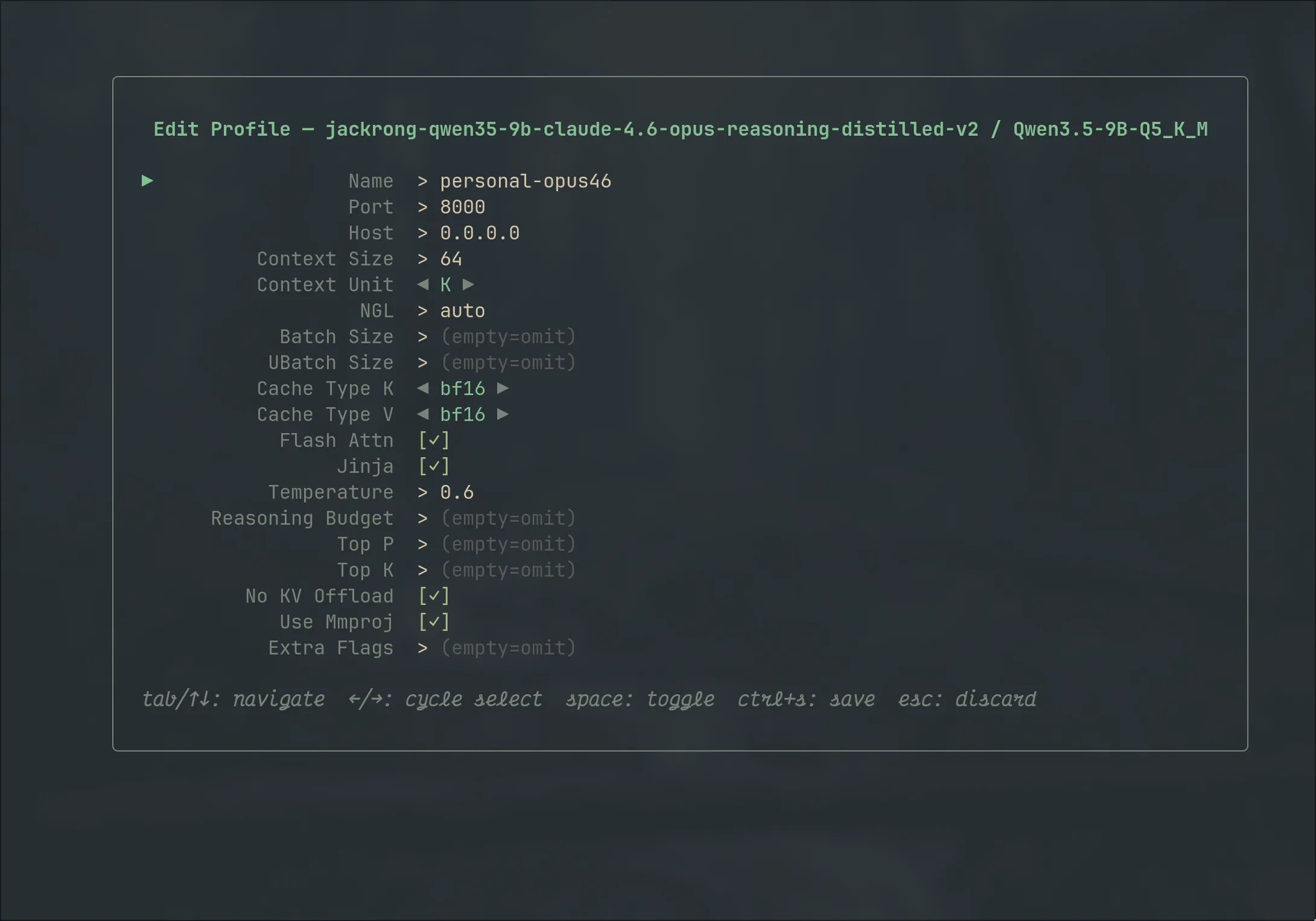

Configure once, launch forever

Name your profile and set the context size, GPU layers, batch parameters, flash attention, and quantization type. Save it once, then relaunch in seconds.

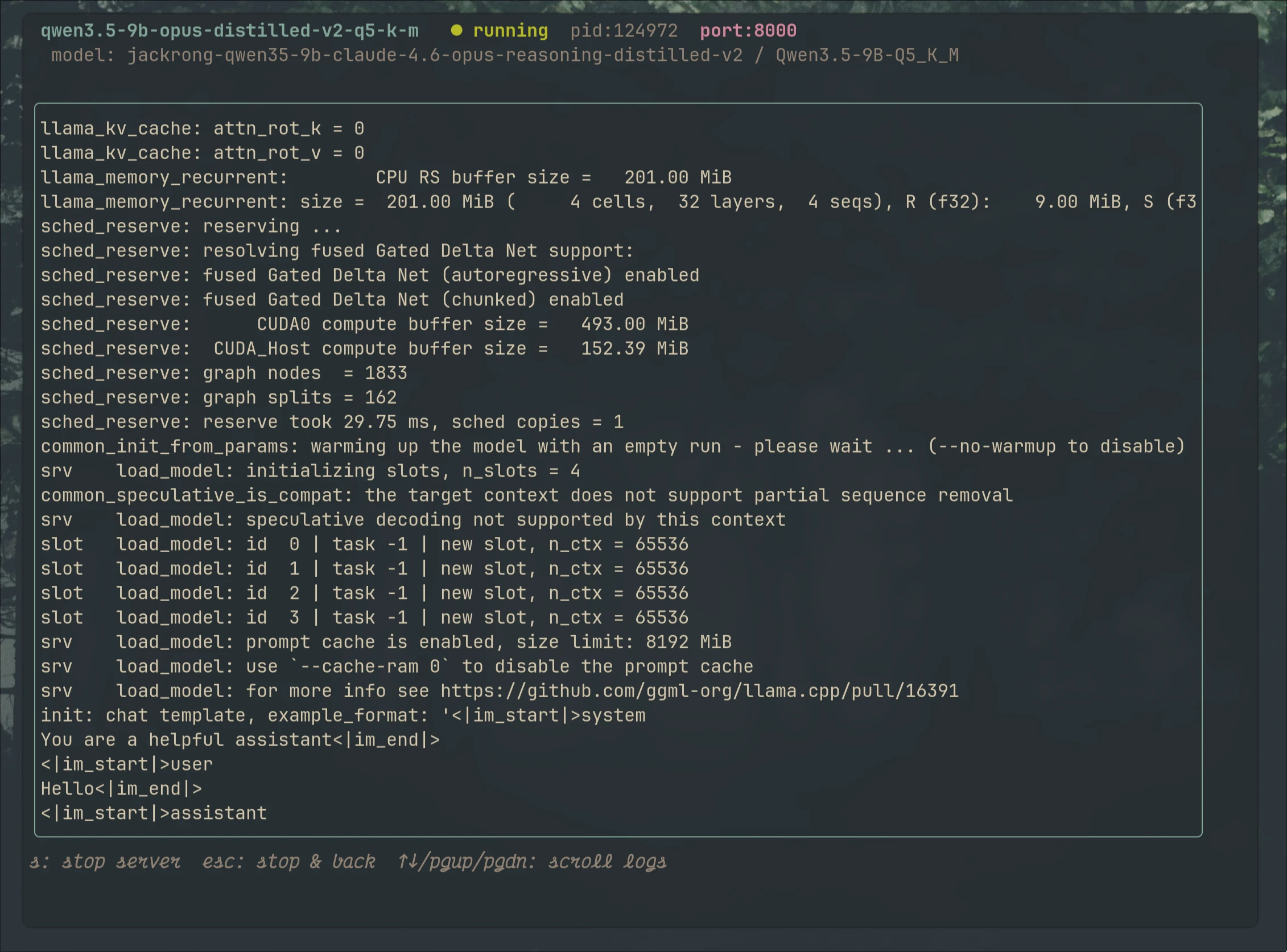

Watch inference happen live

A built-in viewport streams logs alongside system and model metrics in real time. Stop, restart, or switch models without leaving the TUI.